Resources

Expert insights and strategies on raising capital, attracting customers, and leveraging B2B content for emerging tech.

The Power of Sophisticated LLMs for Founding Teams

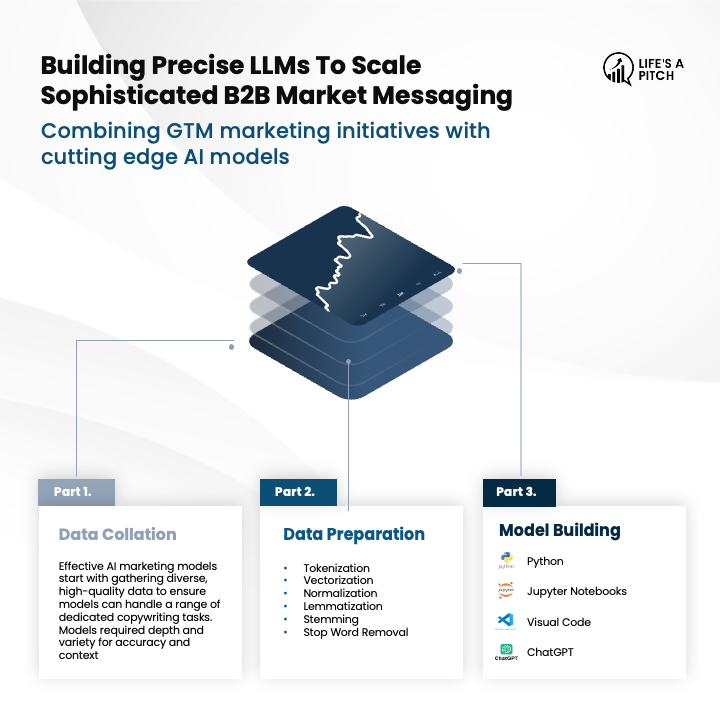

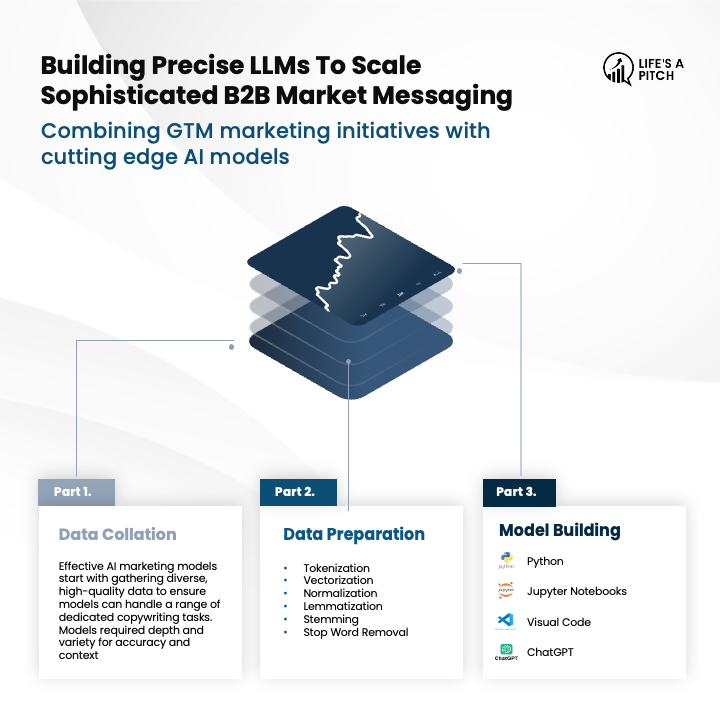

Emerging B2B technology can be difficult to position and explain, not to mention the challenge of creating clear, impactful messages at scale.

Here's how we are building sophisticated LLMs for founding teams with speed, performance, and precision.

Step 1: Data Collation

Effective AI messaging models start with gathering diverse, high-quality data. This gives your models a rich dataset to draw on for copywriting tasks across every channel. The essential sources are:

External Data Sources: Tools like Apify for data scraping, and Crunchbase/PitchBook for sector-specific industry data.

Industry Reports: Use up-to-date market research and news articles for specific industry terminology that resonates.

Writing Samples: Maintain consistency of tone and preferred phrasing with examples of your team’s writing style.

Public-facing Materials: Website content, case studies, marketing decks and content, industry insights, and articles that capture your company's voice.
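As a rough illustration of the collation step, the sketch below gathers documents from the four source types above into one de-duplicated list of records. The category labels, the `collate` function, and the record fields are all assumptions made for this example; they are not a prescribed schema.

```python
import hashlib
import json

# Hypothetical category labels mirroring the four source types listed above.
SOURCE_TYPES = {"external_data", "industry_report", "writing_sample", "public_material"}


def collate(documents):
    """Turn (source_type, text) pairs into de-duplicated records."""
    records = []
    seen = set()
    for source_type, text in documents:
        if source_type not in SOURCE_TYPES:
            raise ValueError(f"unknown source type: {source_type}")
        cleaned = " ".join(text.split())  # collapse stray whitespace
        if not cleaned:
            continue  # skip empty documents
        digest = hashlib.sha256(cleaned.encode("utf-8")).hexdigest()
        if digest in seen:
            continue  # skip exact duplicates, keeping the first occurrence
        seen.add(digest)
        records.append({"id": digest[:12], "source_type": source_type, "text": cleaned})
    return records


if __name__ == "__main__":
    sample = [
        ("writing_sample", "We help founders   tell clear stories."),
        ("public_material", "We help founders tell clear stories."),
        ("industry_report", "Edge AI adoption is accelerating."),
    ]
    print(json.dumps(collate(sample), indent=2))
```

Tagging each record with its source type keeps tone examples (writing samples) separable from terminology sources (industry reports) later in the workflow.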

Step 2: Data Preparation

Use Python scripts to clean and structure your data so that model outputs are accurate and reliable:

Install Essential Tools: Jupyter Notebooks and Visual Studio Code for writing code and running data processing workflows.

Create Scripts: Copilot and ChatGPT can help generate scripts that convert a variety of file formats into enriched, LLM-friendly JSON outputs.

Implement Key Processing Techniques:

Tokenization: Break text into individual tokens (words or subword units) that can be processed one at a time.

Vectorization: Convert text into numerical vectors so that it can be compared and analyzed mathematically.

Normalization: Standardize text by converting to lowercase and removing punctuation.

Stemming and Lemmatization: Reduce words to their base or dictionary form.

Stop Word Removal: Eliminate common, low-value words.
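The processing techniques above can be sketched in a few lines of standard-library Python. This is a minimal illustration under stated assumptions, not a production pipeline: the stop-word list is deliberately tiny, `crude_stem` is a simple suffix stripper standing in for a real stemmer or lemmatizer, and `vectorize` uses plain bag-of-words counts rather than learned embeddings. All function names are hypothetical.

```python
import json
import re
from collections import Counter

# A tiny illustrative stop-word list; real lists are much longer.
STOP_WORDS = {"a", "an", "and", "the", "of", "to", "in", "for", "is", "are", "with"}


def normalize(text):
    """Lowercase the text and replace punctuation with spaces."""
    return re.sub(r"[^\w\s]", " ", text.lower())


def tokenize(text):
    """Normalize the text, then split it into word tokens on whitespace."""
    return normalize(text).split()


def remove_stop_words(tokens):
    """Drop common, low-value words."""
    return [t for t in tokens if t not in STOP_WORDS]


def crude_stem(token):
    """Strip a few common suffixes (a stand-in for a real stemmer)."""
    for suffix in ("ing", "ed", "es", "s"):
        if token.endswith(suffix) and len(token) - len(suffix) >= 3:
            return token[: -len(suffix)]
    return token


def preprocess(text):
    """Run normalization, tokenization, stop-word removal, and stemming."""
    return [crude_stem(t) for t in remove_stop_words(tokenize(text))]


def vectorize(token_lists):
    """Build a shared vocabulary and one bag-of-words count vector per document."""
    vocab = sorted({t for tokens in token_lists for t in tokens})
    vectors = []
    for tokens in token_lists:
        counts = Counter(tokens)
        vectors.append([counts[term] for term in vocab])
    return vocab, vectors


if __name__ == "__main__":
    docs = ["The models are scaling, and scaling fast!", "A model for founders."]
    processed = [preprocess(d) for d in docs]
    vocab, vectors = vectorize(processed)
    # Emit an enriched JSON record per document.
    enriched = [{"text": d, "tokens": p, "vector": v} for d, p, v in zip(docs, processed, vectors)]
    print(json.dumps({"vocab": vocab, "documents": enriched}, indent=2))
```

In practice, a library such as NLTK or spaCy would usually replace the hand-rolled stemmer and stop-word list; the stdlib version is used here only to keep the example self-contained.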

Step 3: Model Building & Fine-Tuning

Load prepared data and refine models for optimal performance:

Load Data into Models: Use ChatGPT's custom GPT capabilities to integrate enriched JSON data.

Train the Model: Select and train the appropriate architecture. The best teams understand they need a multitude of models to enhance their understanding of the market and communicate the value of their solutions clearly.

Fine-Tune Instructions: Use specific instructions and iterative testing to refine the model's outputs.
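One way to make the iterative testing of instructions repeatable is to check each generated message against a small set of rules after every instruction change. The sketch below is an assumption about how such a check might look: `check_output`, `evaluate`, and the rule names are hypothetical, and `fake_generate` is a placeholder for whatever model call you actually use.

```python
def check_output(text, required_terms, banned_terms, max_words):
    """Return a list of rule violations for one generated message."""
    problems = []
    lowered = text.lower()
    for term in required_terms:
        if term.lower() not in lowered:
            problems.append(f"missing required term: {term}")
    for term in banned_terms:
        if term.lower() in lowered:
            problems.append(f"contains banned term: {term}")
    if len(text.split()) > max_words:
        problems.append(f"longer than {max_words} words")
    return problems


def evaluate(generate, prompts, **rules):
    """Run every prompt through `generate` and collect violations per prompt."""
    return {prompt: check_output(generate(prompt), **rules) for prompt in prompts}


def fake_generate(prompt):
    """Placeholder for a real model call; returns a canned message."""
    return f"Our platform cuts onboarding time. ({prompt})"


if __name__ == "__main__":
    report = evaluate(
        fake_generate,
        ["LinkedIn post", "Cold email opener"],
        required_terms=["platform"],
        banned_terms=["synergy"],
        max_words=40,
    )
    for prompt, problems in report.items():
        print(prompt, "->", problems or "ok")
```

Re-running the same prompts after each change to the instructions shows whether a revision fixed one issue while introducing another.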

WANT TO LEARN MORE?

We are revolutionizing the way messages and stories are being told in the B2B emerging tech landscape. This is part of our FREE 5-part series, which provides the most comprehensive deep dive into how emerging technology companies can rapidly, efficiently, and effectively scale their B2B marketing message.


Life's A Pitch is a leading business consulting agency. We’re experts in securing financial capital and building impactful sales tools and strategy for your business.
